This article is a comprehensive walkthrough of the integration between the Axigen Mail Server and the Veritas Cluster Server, version 5.0. Specifically, this analysis provides anyone looking to integrate Axigen with the Veritas Cluster Suite with an expert opinion on the process itself and the steps to be taken for optimal, rewarding results.

Around two years ago, the Axigen Mail Server entered its Cluster Support Era, when the final touches were added to the SMTP, POP3, IMAP and Webmail proxy services. Back then, our main concern was the Red Hat Cluster Suite included in the RHEL5 Linux distribution, developed and maintained by Red Hat, because it was (and still is) one of the most widespread clustering solutions, and it also has a free-of-charge alternative built into CentOS.

However, now that the product has evolved and its exposure to the market has grown considerably, a variety of clustering alternatives have to be supported to meet the demands and requirements of our customers. The year 2009 was very productive from the clustering integration standpoint, and the team amassed a great deal of valuable knowledge.

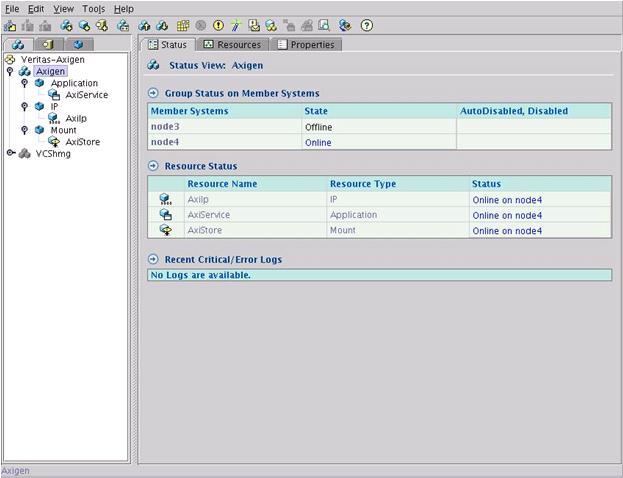

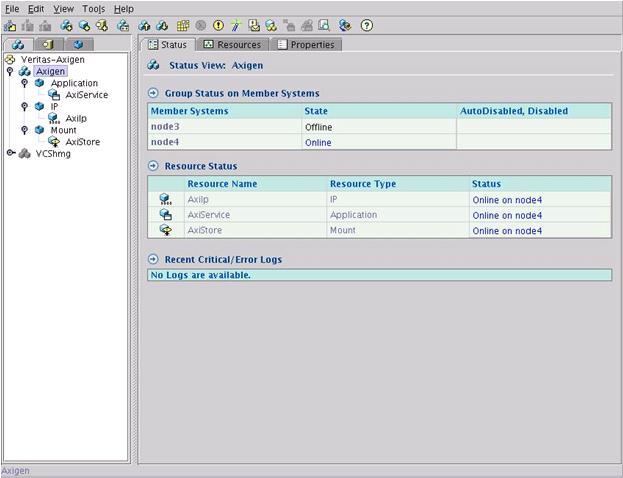

The Veritas Cluster Server version 5.0

Few experts in the IT&C industry have not heard of the Symantec Veritas Cluster suite. It is one of the most advanced and reliable solutions on the market, partly because it has a lot of history behind it. Like most mature products, it has been polished to a great extent, and its usability is much higher than that of its younger counterparts. Nevertheless, for anyone who has not had any contact with the VCS suite before, the ease of use and the degree of automation embedded in the product will be a very pleasant surprise.

Like most failover cluster packages, Veritas uses the basic building blocks of “movable system services”. The cluster is made up of a set of systems, called nodes, some resources (like storage devices, IPs, shares and service instances), some zones, and some rules that govern how services migrate from one computer to another.

Normally, a cluster installation takes some time, even for more experienced users who complete the preparation phase flawlessly. I was pleasantly surprised to find that this is not the case with VCS5. In a little under one hour, the entire cluster was installed and ready to go on my four cluster nodes, leaving me with only the task of configuring it. The installation itself is pretty seamless, as the automatic installation wizard does its job without generating issues.

Another installer feature I enjoy is the environment pre-check, which lets you see whether you overlooked any details during your cluster preparation phase. This feature is also available in the Windows 2003 and 2008 failover cluster suite and is always a welcome addition; RHCS, however, is missing this functionality.

The screenshot of my cluster setup below, with the Axigen failover service configured to run on either of two nodes (node3 and node4), shows the basic components anyone should have in place when integrating Axigen with VCS. It depicts the VCS Cluster Explorer, or “hagui”, which is written in Java, as far as I can tell:

That said, my cluster service group contains the following essential elements:

- The Axigen domain storage. The entire working directory of my Axigen server was set up on a shared disk that either of the two cluster nodes can access in turn. This way, either node can run an Axigen instance that has access to the same domain storage and the same user database.

- The Axigen service IP address. This is a very simple cluster resource and probably the most common one. It is essentially the IP address assigned to the Axigen server no matter which node it is currently running on. This allows me to access the email services through a single IP address, rather than checking each time which node the service is running on.

- The Axigen service instance configuration. This little gimmick instructs the VCS monitors running on my nodes to run a specific program once the IP and the storage are loaded up. Naturally, my application is the Axigen Mail Server, so the service configuration in my case points to the Axigen binary that starts the server up.
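To make the three elements above more concrete, here is a rough sketch of how such a service group might be assembled with the VCS command-line tools instead of the Cluster Explorer. Everything specific in it is an assumption made for illustration: the group and resource names (`axigen_sg`, `axigen_mnt`, `axigen_ip`, `axigen_app`), the device, mount point, IP address, netmask and init script path are hypothetical, and the agents may require attributes beyond the ones shown. By default the script only prints the commands.

```shell
#!/bin/bash
# Sketch only: every name, address, device and path below is hypothetical.
DRY_RUN="${DRY_RUN:-1}"   # 1 = print the commands instead of running them

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"         # note: quoting of multi-word arguments is not shown
    else
        "$@"
    fi
}

build_axigen_group() {
    run haconf -makerw                                  # open the configuration for writing
    run hagrp -add axigen_sg
    run hagrp -modify axigen_sg SystemList node3 0 node4 1

    # Shared storage that holds the Axigen working directory
    run hares -add axigen_mnt Mount axigen_sg
    run hares -modify axigen_mnt MountPoint /var/opt/axigen
    run hares -modify axigen_mnt BlockDevice /dev/sdb1
    run hares -modify axigen_mnt FSType ext3

    # Service IP address that follows the server from node to node
    run hares -add axigen_ip IP axigen_sg
    run hares -modify axigen_ip Device eth0
    run hares -modify axigen_ip Address 192.168.1.50
    run hares -modify axigen_ip NetMask 255.255.255.0

    # The Axigen service instance itself. The monitor program must follow
    # the exit code convention described under Notes & Caveats.
    run hares -add axigen_app Application axigen_sg
    run hares -modify axigen_app StartProgram "/etc/init.d/axigen start"
    run hares -modify axigen_app StopProgram "/etc/init.d/axigen stop"
    run hares -modify axigen_app MonitorProgram "/etc/init.d/axigen status"

    # Start Axigen only after the storage and the IP are online
    run hares -link axigen_app axigen_mnt
    run hares -link axigen_app axigen_ip

    run haconf -dump -makero                            # save and close the configuration
}

build_axigen_group
```

The two `hares -link` lines express the dependency described above: the application resource is the parent, so VCS brings the storage and the IP online first.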

Notes & Caveats

The only word of advice that comes to mind for anyone looking to integrate Axigen with the VCS suite concerns the way the cluster service scripts are monitored for success or failure. Normally, the average BASH script exits with code 0 after a successful run. In the case of Veritas, any exit code outside the 100 to 110 range is considered “unknown” and therefore cannot be validated. Here is the full list of exit codes that must be used for good results:

- Less than 100: “unknown”

- Exactly 100: “offline”

- Between 101 and 110: “online with 10% - 100% confidence”

- More than 110: “unknown”
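When debugging a script, it can help to see how a given exit code falls into the list above. The following is a minimal sketch of that mapping; the function name `vcs_state` is made up for this example and is not part of VCS. It assumes the confidence scales linearly, so that 101 means 10% and 110 means 100%.

```shell
#!/bin/bash
# Hypothetical helper: describe how an exit code would be interpreted,
# following the list above.
vcs_state() {
    local code=$1
    if [ "$code" -eq 100 ]; then
        echo "offline"
    elif [ "$code" -ge 101 ] && [ "$code" -le 110 ]; then
        # 101 -> 10% ... 110 -> 100% (assumed linear scale)
        echo "online $(( (code - 100) * 10 ))%"
    else
        # Anything below 100 or above 110
        echo "unknown"
    fi
}

# Print the interpretation of a few sample codes
for code in 0 100 105 110 111; do
    printf '%3d -> %s\n' "$code" "$(vcs_state "$code")"
done
```

Note that the conventional success code 0 lands in the “unknown” bucket, which is exactly why an unmodified script cannot be validated.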

In this case, the Axigen init scripts should be edited to exit with either 100, in case of startup failure, or 110, in case of success. Other acceptable (“known”) values may be used, but they add little, as the Axigen init scripts are not built with the concept of “confidence” in mind.
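As a variation on editing the init script directly, the same translation could be done in a small wrapper that leaves the original script untouched. This is only a sketch under assumptions: the init script path `/etc/init.d/axigen` and the helper names `to_vcs_code` and `run_translated` are hypothetical, and the wrapper simply treats exit status 0 as success and anything else as failure.

```shell
#!/bin/bash
# Hypothetical wrapper around the Axigen init script (path is an assumption).
INIT_SCRIPT="${INIT_SCRIPT:-/etc/init.d/axigen}"

# Translate a conventional exit status (0 = success) into the two codes
# described above: 110 for success, 100 for failure.
to_vcs_code() {
    if [ "$1" -eq 0 ]; then
        echo 110
    else
        echo 100
    fi
}

# Run the init script with the given action and return the translated code.
run_translated() {
    "$INIT_SCRIPT" "$@" >/dev/null 2>&1
    local rc=$?
    return "$(to_vcs_code "$rc")"
}

# Act only when an action (for example "start" or "status") is supplied.
if [ $# -gt 0 ]; then
    run_translated "$@"
    exit $?
fi
```

Saved under a name of your choice and called with an action such as `start`, the wrapper exits with 110 when the underlying script succeeds and with 100 otherwise, without any notion of partial confidence.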