This guide covers the deployment of Elasticsearch and Kibana as Docker containers, providing the log storage and visualization backend for Axigen logs collected by Fluent Bit. If you prefer a traditional package-based installation, see our companion article on how to integrate Kibana with Elasticsearch for Axigen log visualization.

Overview

This is a companion article in the Axigen centralized logging series. The log collection part — installing and configuring Fluent Bit to collect Axigen logs and forward them to Elasticsearch — is covered in a separate article: set up Fluent Bit to collect Axigen logs and forward them to Elasticsearch.


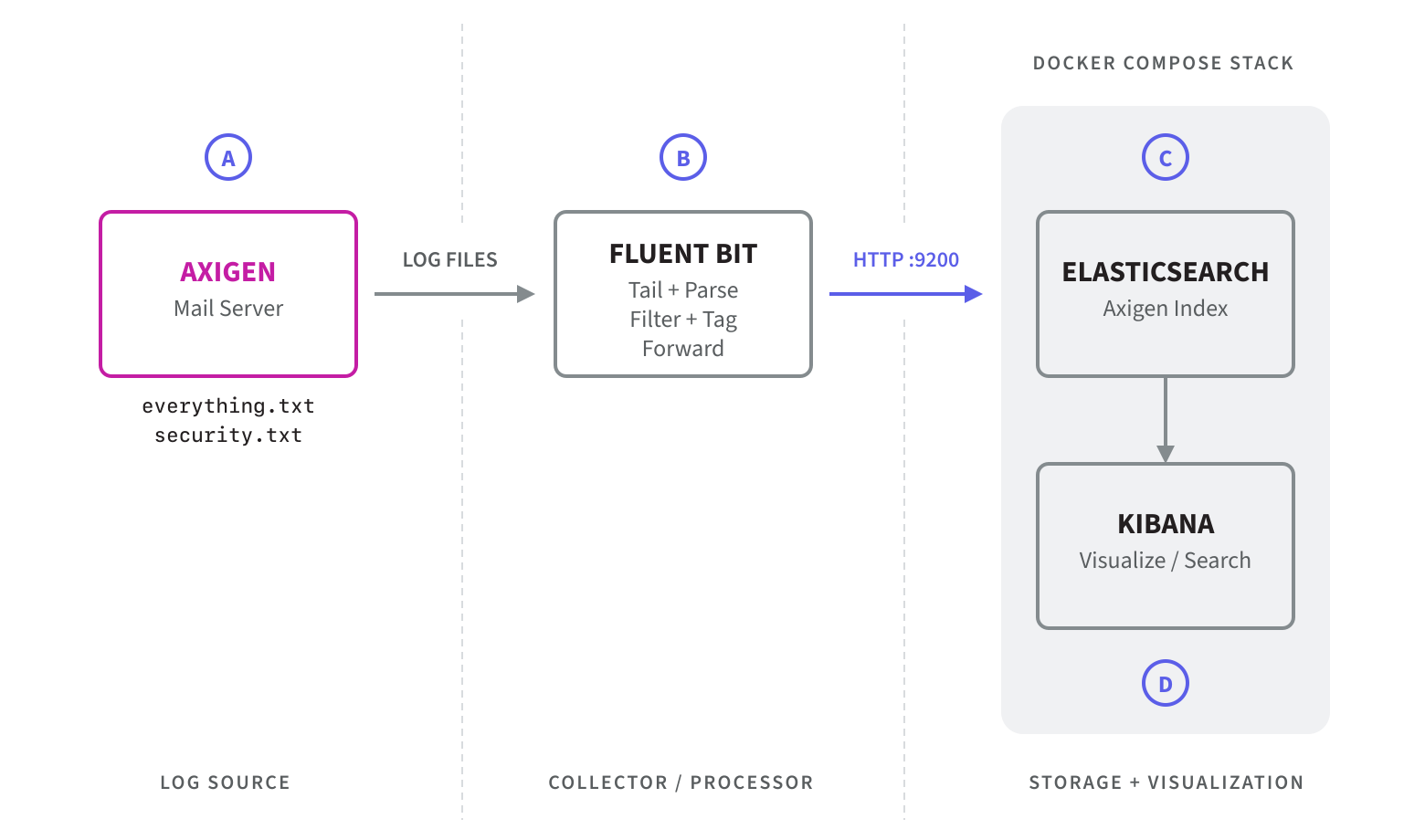

The diagram below illustrates the complete log flow, from Axigen through Fluent Bit to the Docker-based Elasticsearch and Kibana stack:

Prerequisites

- Docker Engine and the Docker Compose v2 plugin (the docker compose command used below) installed on the target host

- The configuration files described below, available in the same working directory

File Structure

The deployment uses four files:

- compose.yaml — defines the Docker services

- kibana-init.sh — initializes the kibana_system user password in Elasticsearch

- env.example — template for environment variables

- .env — your local copy with actual credentials (not committed to version control)

Component Overview

Elasticsearch

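Elasticsearch runs as a single-node cluster with X-Pack security enabled. It stores the Axigen log data forwarded by Fluent Bit and listens on port 9200. The port is published on the Docker host so Fluent Bit can send logs to it. Kibana and the init container reach the same port internally over the log-stack network as http://elastic:9200.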

Key settings:

- discovery.type=single-node — suitable for standalone deployments

- xpack.security.enabled=true — enforces authentication

- Memory limit: 4 GB

- Data persisted to a named Docker volume (es_data)
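Once the stack is running, you can use the `_cluster/health` API to confirm that the single-node cluster is up and that authentication is enforced. The following is a minimal sketch. It assumes you run it on the Docker host, that ES listens on localhost:9200, and that ELASTIC_USER_PASSWORD is exported in your shell. `es_health` and `health_status` are hypothetical helper names, not part of the deployment files. On a single node, a `yellow` status is expected when an index such as `axigen`, created with default settings, requests a replica that cannot be allocated.

```shell
# Hypothetical helpers for checking the Elasticsearch container.
# Assumes ELASTIC_USER_PASSWORD is exported and ES listens on localhost:9200.

# Query cluster health as the elastic superuser; -f makes curl fail on 401/5xx.
es_health() {
  curl -s -f -u "elastic:${ELASTIC_USER_PASSWORD}" \
    "http://localhost:9200/_cluster/health?pretty"
}

# Print only the "status" field (green, yellow or red) from JSON read on stdin.
health_status() {
  sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([a-z]*\)".*/\1/p'
}
```

For example, `es_health | health_status` prints just the status word.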

Kibana Init Container

The kibana-init service is a one-shot container that runs before Kibana starts. It performs the following tasks:

- Waits until Elasticsearch is reachable on port 9200

- Resets the password of the built-in kibana_system user. This step is required because ELASTIC_PASSWORD only sets the password of the elastic superuser. The kibana_system user has no password you know in advance, and Kibana needs a known credential to authenticate

- Checks whether the axigen index exists and creates it if needed — making the step idempotent and safe across container restarts
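After kibana-init has run, you can confirm that the kibana_system password was actually changed by authenticating as that user with the `_security/_authenticate` API. The following is a minimal sketch. It assumes you run it on the Docker host against the published port 9200 and that ELASTIC_USER_PASSWORD is exported. `check_kibana_system` is a hypothetical helper name.

```shell
# Hypothetical helper: succeeds only if kibana_system can log in with the
# password kibana-init set (the same value as ELASTIC_USER_PASSWORD).
check_kibana_system() {
  if curl -s -f -o /dev/null -u "kibana_system:${ELASTIC_USER_PASSWORD}" \
      "http://localhost:9200/_security/_authenticate"; then
    echo "kibana_system authentication OK"
  else
    echo "kibana_system authentication failed" >&2
    return 1
  fi
}
```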

Kibana

Kibana connects to Elasticsearch using the kibana_system user and exposes the web UI on port 5601. It depends on both elastic and kibana-init, ensuring the password is set before Kibana attempts to authenticate.

Configuration Files

compose.yaml

```yaml
networks:
  log-stack:
    driver: bridge

services:
  elastic:
    image: elasticsearch:8.19.12
    container_name: elastic
    deploy:
      resources:
        limits:
          memory: 4g
    environment:
      - discovery.type=single-node
      - network.host=0.0.0.0
      - xpack.security.enabled=true
      - ELASTIC_PASSWORD=${ELASTIC_USER_PASSWORD:-dummy-pass}
    ports:
      - "9200:9200"
    volumes:
      - es_data:/usr/share/elasticsearch/data
    networks:
      - log-stack
    restart: unless-stopped

  kibana-init:
    image: elasticsearch:8.19.12
    container_name: kibana-init
    environment:
      - ELASTIC_PASSWORD=${ELASTIC_USER_PASSWORD:-dummy-pass}
    depends_on:
      - elastic
    volumes:
      - "./kibana-init.sh:/kibana-init.sh:ro"
    entrypoint: ["/bin/bash", "/kibana-init.sh"]
    networks:
      - log-stack

  kibana:
    image: kibana:8.19.12
    container_name: kibana
    environment:
      - ELASTICSEARCH_HOSTS=http://elastic:9200
      - ELASTICSEARCH_USERNAME=kibana_system
      - ELASTICSEARCH_PASSWORD=${ELASTIC_USER_PASSWORD:-dummy-pass}
    ports:
      - "5601:5601"
    depends_on:
      elastic:
        condition: service_started
      kibana-init:
        # Start Kibana only after kibana-init has exited with status 0;
        # the short list syntax would only wait for the container to start.
        condition: service_completed_successfully
    networks:
      - log-stack
    restart: unless-stopped

volumes:
  es_data:
    driver: local
```

kibana-init.sh

```shell
#!/bin/bash
# One-shot init script for the kibana-init container.
# curl -f turns HTTP error responses (401, 503, ...) into a non-zero exit code,
# so the wait loop and the error checks below also react to rejected requests.

echo "Waiting for Elasticsearch to be ready..."
until curl -s -f -u "elastic:${ELASTIC_PASSWORD}" http://elastic:9200 >/dev/null; do
  sleep 2
done

echo "Elasticsearch is up, resetting kibana_system password..."
# The password is embedded in JSON as-is: it must not contain " or \.
curl -L -s -f -X POST -u "elastic:${ELASTIC_PASSWORD}" \
  -H "Content-Type: application/json" \
  http://elastic:9200/_security/user/kibana_system/_password \
  -d "{\"password\":\"${ELASTIC_PASSWORD}\"}"
retCode=$?
if [ ${retCode} -ne 0 ]; then
  echo "Password set failed. Exiting init container."
  exit 1
else
  echo "Password set."
fi

echo "Checking if axigen index exists..."
HTTP_STATUS=$(curl -s -o /dev/null -w "%{http_code}" \
  -u "elastic:${ELASTIC_PASSWORD}" \
  http://elastic:9200/axigen)

if [ "${HTTP_STATUS}" -eq 200 ]; then
  echo "axigen index already exists, skipping creation."
else
  echo "Creating axigen index..."
  curl -L -s -f -X PUT -u "elastic:${ELASTIC_PASSWORD}" \
    -H "Content-Type: application/json" \
    http://elastic:9200/axigen
  retCode=$?
  if [ ${retCode} -ne 0 ]; then
    echo "Index creation failed. Exiting init container."
    exit 1
  else
    echo "axigen index created successfully."
  fi
fi

echo "Init complete. Exiting init container."
```
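The script interpolates ELASTIC_PASSWORD directly into the JSON request body, so it assumes the password contains no double quotes or backslashes. If your password might contain them, you can escape those two characters with sed before building the body. The following is a minimal sketch using a hypothetical helper, `json_password_body`. It does not handle control characters such as tabs or newlines, and it assumes sed is available in the container image, as it is on common Linux base images.

```shell
# Hypothetical helper, not part of the original kibana-init.sh:
# prints {"password":"..."} with backslashes and double quotes escaped.
json_password_body() {
  local escaped
  # Escape backslashes first, then double quotes, so the JSON stays valid.
  escaped=$(printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g')
  printf '{"password":"%s"}' "$escaped"
}
```

If you define the helper near the top of kibana-init.sh, the password request could use `-d "$(json_password_body "${ELASTIC_PASSWORD}")"` in place of the inline body.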

env.example

```shell
ELASTIC_USER_PASSWORD=your-password
```

Deployment Steps

Step 1: Create and Configure the Environment File

Copy the provided template and set a strong password for the elastic superuser:

```shell
cp env.example .env
```

Edit .env and replace the placeholder value your-password with your actual password, for example ELASTIC_USER_PASSWORD=your-strong-password-here.

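Note: This password is used for the elastic superuser, and the init container also applies it to the kibana_system user. Do not commit the .env file to version control. If .env is missing or does not define ELASTIC_USER_PASSWORD, Compose falls back to the default value dummy-pass set in compose.yaml, so make sure the file is in place before you start the stack.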
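If you prefer not to choose a password by hand, you can generate the .env file instead. The following is a minimal sketch. It assumes a Linux host with /dev/urandom, and `write_env_file` is a hypothetical helper name. It writes ELASTIC_USER_PASSWORD with 32 random letters and digits. Restricting the password to alphanumerics also keeps it safe to embed in the JSON body that kibana-init.sh sends.

```shell
# Hypothetical helper: write_env_file [PATH]
# Writes ELASTIC_USER_PASSWORD with a random 32-character alphanumeric value.
write_env_file() {
  local target="${1:-.env}"
  local password
  # Read a fixed 512 random bytes and keep only A-Z, a-z and 0-9.
  password=$(head -c 512 /dev/urandom | LC_ALL=C tr -dc 'A-Za-z0-9')
  # Roughly a quarter of the bytes survive; refuse to write a short password.
  if [ "${#password}" -lt 32 ]; then
    echo "not enough random characters, try again" >&2
    return 1
  fi
  # umask 077 makes a newly created file readable and writable by its owner only;
  # %.32s keeps only the first 32 characters of the password.
  ( umask 077 && printf 'ELASTIC_USER_PASSWORD=%.32s\n' "$password" >"$target" )
}
```

For example, `write_env_file .env` replaces the copy-and-edit steps above.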

Step 2: Start the Containers

```shell
docker compose up -d
```

Docker Compose will start the services in dependency order:

- elastic starts first

- kibana-init starts once the elastic container is running, waits until Elasticsearch responds, sets the kibana_system password, creates the axigen index if it does not already exist, then exits

- kibana starts after kibana-init completes
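Because kibana-init is expected to exit, its exit code is the quickest signal that initialization succeeded. The following is a minimal sketch that reads the container state with `docker inspect` and a Go template. It assumes the container name kibana-init from compose.yaml, and `init_status` is a hypothetical helper name.

```shell
# Hypothetical helper: reports whether the one-shot kibana-init container
# has exited, and with which status (0 means both init steps succeeded).
init_status() {
  local state code
  state=$(docker inspect -f '{{.State.Status}}' kibana-init) || return 1
  code=$(docker inspect -f '{{.State.ExitCode}}' kibana-init) || return 1
  if [ "$state" = "exited" ] && [ "$code" -eq 0 ]; then
    echo "kibana-init completed successfully"
  else
    echo "kibana-init state=${state} exit_code=${code}; see: docker compose logs kibana-init" >&2
    return 1
  fi
}
```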

Step 3: Verify the Deployment

Check that Elasticsearch is responding:

```shell
curl -u elastic:your-strong-password-here http://localhost:9200
```

Open the Kibana UI in your browser:

http://<host-ip>:5601

Log in with username elastic and the password you configured in .env.
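Kibana may take a while to become available after its container starts. The following is a minimal sketch of a hypothetical `retry` helper that re-runs a command until it succeeds or runs out of attempts. The usage example after the block polls Kibana's `/api/status` endpoint. It assumes the endpoint returns an HTTP error status until Kibana is ready, which `curl -f` turns into a failed attempt.

```shell
# Hypothetical helper: retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it exits 0; returns 1 if every attempt fails.
retry() {
  local attempts="$1" delay="$2" i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    # Do not sleep after the final attempt.
    if [ "$i" -lt "$attempts" ]; then
      sleep "$delay"
    fi
    i=$((i + 1))
  done
  return 1
}
```

For example: `retry 30 5 curl -s -f -o /dev/null -u "elastic:${ELASTIC_USER_PASSWORD}" http://localhost:5601/api/status && echo "Kibana is up"`.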

Connecting Fluent Bit to Elasticsearch

With Elasticsearch running, update the Fluent Bit output configuration on your Axigen host (as described in the Fluent Bit log collection setup) to point to this instance:

```text
[OUTPUT]
    Name               es
    Host               <elasticsearch-host-ip>
    Port               9200
    Index              axigen
    HTTP_User          elastic
    HTTP_Passwd        your-strong-password-here
    Time_Key_Nanos     On
    Suppress_Type_Name On
    Match              *
```

Replace <elasticsearch-host-ip> with the IP address or hostname of the Docker host running Elasticsearch.
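To confirm that Fluent Bit is actually delivering Axigen logs, count the documents in the axigen index and check that the number grows over time. The following is a minimal sketch. It assumes ELASTIC_USER_PASSWORD is exported and Elasticsearch is reachable on localhost:9200. `axigen_doc_count` and `parse_count` are hypothetical helper names.

```shell
# Hypothetical helpers for checking log ingestion into the axigen index.

# Query the _count API for the axigen index as the elastic superuser.
axigen_doc_count() {
  curl -s -f -u "elastic:${ELASTIC_USER_PASSWORD}" \
    "http://localhost:9200/axigen/_count" | parse_count
}

# Extract the numeric "count" field from a _count JSON response on stdin.
parse_count() {
  sed -n 's/.*"count"[[:space:]]*:[[:space:]]*\([0-9][0-9]*\).*/\1/p'
}
```

Running `axigen_doc_count` a few minutes apart while Axigen is handling traffic should show an increasing number.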

Notes

- The kibana-init container exits after completing its task. This is expected and normal.

- The es_data Docker volume persists Elasticsearch data across container restarts.

- Both elastic and kibana are configured with restart: unless-stopped. They come back automatically after a host reboot, provided the Docker daemon is enabled to start at boot and the containers were not stopped manually.

Conclusion

You now have a fully containerized Elasticsearch and Kibana stack ready to receive and visualize Axigen logs. This Docker-based deployment offers a quick and reproducible way to set up log management infrastructure, with all configuration stored as code.

For the complete log collection pipeline, make sure Fluent Bit is configured to forward Axigen logs to this Elasticsearch instance, as described in our guide on how to set up Fluent Bit to collect Axigen logs and forward them to Elasticsearch. To extend your logging capabilities with real-time data streaming, see how to integrate Fluent Bit with Kafka for real-time Axigen log streaming.