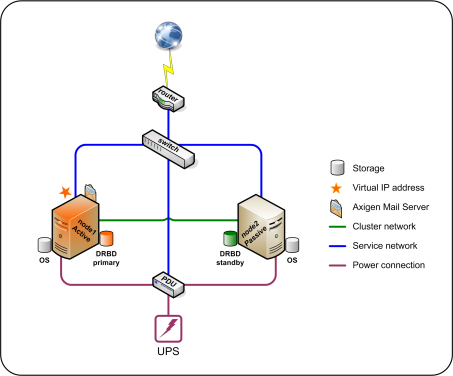

This document uses an example configuration for an Active-Passive two-node cluster, described below. The configuration options shown therefore refer to these particular resources.

Operating System

Although the Pacemaker cluster resource manager, the Corosync cluster engine and DRBD® (Distributed Replicated Block Device) are supported on many Linux distributions, this document refers in places to commands and instructions specific to CentOS 7 (from a minimal server installation).

For information on installation and configuration on CentOS 5, please check the separate CentOS 5 document.

IPs and DNS

Each of the two nodes in the Active-Passive cluster has two Ethernet ports, configured with the following IP addresses and FQDNs:

- Node1
  - Hostname = node1
  - node1.axilab.local = 192.168.1.91/24 on enp1s3
  - node1-ha.axilab.local = 10.9.9.91/24 on enp0s3
- Node2
  - Hostname = node2
  - node2.axilab.local = 192.168.1.92/24 on enp1s3
  - node2-ha.axilab.local = 10.9.9.92/24 on enp0s3
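The addressing plan above can be captured as data and turned into host-file entries. The following Python sketch assumes you want both nodes to resolve each other's names locally through /etc/hosts, so that cluster communication does not depend on the DNS server; the `PLAN` layout and the `hosts_lines` helper are hypothetical, and only the names, addresses and interfaces come from this guide.

```python
"""Minimal sketch: render the two-node addressing plan as /etc/hosts lines.

Assumption (not from the guide): names are resolved locally via /etc/hosts.
"""
from ipaddress import ip_interface

# Addressing plan from the list above: (FQDN, address/prefix, interface)
PLAN = {
    "node1": [
        ("node1.axilab.local", "192.168.1.91/24", "enp1s3"),
        ("node1-ha.axilab.local", "10.9.9.91/24", "enp0s3"),
    ],
    "node2": [
        ("node2.axilab.local", "192.168.1.92/24", "enp1s3"),
        ("node2-ha.axilab.local", "10.9.9.92/24", "enp0s3"),
    ],
}


def hosts_lines(plan):
    """Return one '<ip> <fqdn> <short name>' line per configured address."""
    lines = []
    for node in sorted(plan):
        for fqdn, cidr, _iface in plan[node]:
            ip = ip_interface(cidr).ip          # drop the /24 prefix length
            short = fqdn.split(".", 1)[0]       # e.g. "node1-ha"
            lines.append(f"{ip} {fqdn} {short}")
    return lines


if __name__ == "__main__":
    print("\n".join(hosts_lines(PLAN)))
```

If you go this route, the printed lines would be appended to /etc/hosts on both nodes so that `node1-ha` and `node2-ha` resolve even when DNS is unreachable.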

CentOS 7 provides consistent and predictable naming for network interfaces.

Instead of the traditional eth[0123…] names, interfaces receive names that make them easier to locate and tell apart.

The default is to assign fixed names based on firmware, topology, and location information. This has the advantage that the names are fully automatic, fully predictable, that they stay fixed even if hardware is added or removed (no re-enumeration takes place), and that broken hardware can be replaced seamlessly.
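To illustrate how such a name encodes hardware location, the sketch below decodes the PCI form of the systemd/udev naming scheme: `en` means Ethernet, `p<bus>` is the PCI bus and `s<slot>` the slot, so `enp0s3` is the Ethernet device on PCI bus 0, slot 3. It handles only that one form; other forms such as `eno1` (onboard index) or `enx<MAC>` are deliberately out of scope, and the `decode_pci_name` helper is hypothetical.

```python
import re

# PCI-location form only: <en|wl|ww>p<bus>s<slot>[f<function>]
_PCI_NAME = re.compile(r"^(en|wl|ww)p(\d+)s(\d+)(?:f(\d+))?$")
_TYPES = {"en": "Ethernet", "wl": "WLAN", "ww": "WWAN"}


def decode_pci_name(name):
    """Describe a PCI-location interface name, or return None if it is another form."""
    m = _PCI_NAME.match(name)
    if m is None:
        return None
    prefix, bus, slot, function = m.groups()
    return {
        "type": _TYPES[prefix],
        "pci_bus": int(bus),
        "pci_slot": int(slot),
        # The f<function> suffix is omitted for function 0.
        "pci_function": int(function) if function else 0,
    }


if __name__ == "__main__":
    for iface in ("enp0s3", "enp1s3", "eth0"):
        print(iface, decode_pci_name(iface))
```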

We will use the enp0s3 interfaces exclusively for DRBD and cluster services traffic, and therefore connect them directly through an Ethernet cross-over cable. Axigen services (such as SMTP, IMAP or HTTP) and management traffic (such as SSH or control of the fencing device) will use the enp1s3 interfaces.
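This traffic split works because each peer address lives in a subnet that is directly connected to only one interface. The Python sketch below shows which of node1's interfaces would carry traffic to a given destination. It is a simplification that looks only at directly connected subnets and ignores the routing table; the `NODE1_INTERFACES` mapping and the `egress_interface` helper are hypothetical.

```python
from ipaddress import ip_address, ip_interface

# node1's local addresses, taken from the addressing plan in this guide.
NODE1_INTERFACES = {
    "enp0s3": ip_interface("10.9.9.91/24"),     # DRBD + cluster traffic (cross-over link)
    "enp1s3": ip_interface("192.168.1.91/24"),  # Axigen services + management
}


def egress_interface(destination, interfaces=NODE1_INTERFACES):
    """Return the interface whose directly connected subnet contains destination.

    Only on-link subnets are considered; default routes are ignored, so an
    off-subnet destination returns None.
    """
    dst = ip_address(destination)
    for name, iface in interfaces.items():
        if dst in iface.network:
            return name
    return None


if __name__ == "__main__":
    for target in ("10.9.9.92", "192.168.1.99"):
        print(target, "->", egress_interface(target))
```

With this plan, the DRBD peer `10.9.9.92` maps to `enp0s3` (the cross-over link), while the fencing device at `192.168.1.99` maps to `enp1s3`, which matches the split described above.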

The active node in the cluster will be assigned a floating IP address, 192.168.1.90, registered in DNS as mail.axilab.local.
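Because the floating IP moves to whichever node is active, it has to be usable on the service network of both nodes and must not reuse either node's own address. A minimal Python check of those conditions, using the addresses from this guide, could look like this. The `floating_ip_problems` helper is hypothetical, and the rule that the floating IP must sit in the same /24 as the enp1s3 addresses assumes a flat, single-subnet service network.

```python
from ipaddress import ip_address, ip_interface

# Public (enp1s3) addresses of both nodes and the floating IP, from this guide.
NODE_PUBLIC = [ip_interface("192.168.1.91/24"), ip_interface("192.168.1.92/24")]
FLOATING_IP = ip_address("192.168.1.90")


def floating_ip_problems(vip, node_addrs):
    """Return a list of problems; an empty list means the address looks usable."""
    problems = []
    for addr in node_addrs:
        net = addr.network
        if vip not in net:
            problems.append(f"{vip} is outside {net} (node {addr.ip})")
        elif vip in (net.network_address, net.broadcast_address):
            problems.append(f"{vip} is the network or broadcast address of {net}")
        if vip == addr.ip:
            problems.append(f"{vip} is already used by a node")
    return problems


if __name__ == "__main__":
    print(floating_ip_problems(FLOATING_IP, NODE_PUBLIC) or "floating IP OK")
```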

Storage

DRBD needs its own block device on each node. This can be a physical disk partition or a logical volume. In our example, each node has been configured with a partition, accessible as /dev/sdb1, which will hold the entire Axigen data directory on an ext4 file system. DRBD keeps these partitions synchronized between the two nodes.
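To make the replication path concrete, the sketch below renders a DRBD resource section that replicates `/dev/sdb1` over the dedicated 10.9.9.0/24 link. It assumes DRBD 8.4-style syntax and uses values that are not taken from this guide and are purely illustrative: the resource name `r0`, the device `/dev/drbd0` and TCP port 7789. The `drbd_resource` helper is hypothetical. The `on` section names must match each node's hostname (`node1`, `node2`).

```python
def drbd_resource(name, device, disk, peers, port):
    """Render a DRBD 8.4-style resource section as text.

    peers: list of (hostname, replication_ip) tuples; each hostname must
    match `uname -n` on that node. device/disk/meta-disk are declared once
    at resource level and apply to both hosts.
    """
    lines = [
        f"resource {name} {{",
        f"  device {device};",
        f"  disk {disk};",
        "  meta-disk internal;",
    ]
    for host, ip in peers:
        lines += [f"  on {host} {{", f"    address {ip}:{port};", "  }"]
    lines.append("}")
    return "\n".join(lines) + "\n"


# Hypothetical values: resource "r0", /dev/drbd0, port 7789.
PEERS = [("node1", "10.9.9.91"), ("node2", "10.9.9.92")]

if __name__ == "__main__":
    print(drbd_resource("r0", "/dev/drbd0", "/dev/sdb1", PEERS, 7789), end="")
```

The rendered text would typically be saved, identically on both nodes, as a `.res` file under /etc/drbd.d/.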

Fence Device

The nodes are powered through an APC PDU (Power Distribution Unit) switch, available at 192.168.1.99. The cluster suite will use the fenceadm user on the PDU to power-cycle a node when it decides this is necessary.
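A PDU fences by switching outlets, so the cluster must know which outlet feeds which node. Pacemaker expresses this with the `pcmk_host_map` fencing attribute, written as `node1:1;node2:2` (semicolons between nodes, commas between several outlets of one node). The Python helper below builds that string. The outlet numbers are hypothetical, since this guide does not say which PDU outlets the nodes are plugged into, and the helper name is illustrative.

```python
def pcmk_host_map(outlets):
    """Build a Pacemaker pcmk_host_map value from {node: [outlet, ...]}."""
    return ";".join(
        f"{node}:{','.join(str(o) for o in ports)}"
        for node, ports in sorted(outlets.items())
    )


# Hypothetical outlet assignment on the APC PDU at 192.168.1.99.
OUTLETS = {"node1": [1], "node2": [2]}

if __name__ == "__main__":
    print(pcmk_host_map(OUTLETS))  # node1:1;node2:2
```

Listing two outlets for a node (for example `node1:1,3`) covers servers with redundant power supplies, where both feeds must be cut for the fence to take effect.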

Please note that additional node-level fencing options are available:

- for hardware-based nodes (for example, agents for HP and IBM blades, iLO or IPMI)
- for nodes running in virtual environments (such as VMware, Xen or KVM)

To get the complete list of available fencing agents, the following command can be used: